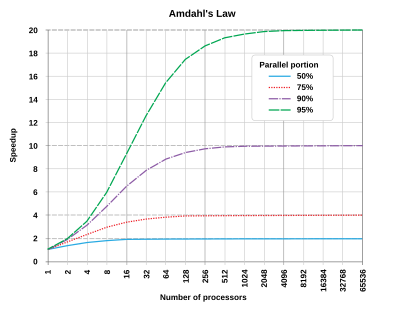

In computer architecture, Amdahl's law (or Amdahl's argument[1]) is a formula which gives the theoretical speedup in latency of the execution of a task at fixed workload that can be expected of a system whose resources are improved.

The law can be stated as:

"the overall performance improvement gained by optimizing a single part of a system is limited by the fraction of time that the improved part is actually used".[2]

It is named after computer scientist Gene Amdahl, and was presented at the American Federation of Information Processing Societies (AFIPS) Spring Joint Computer Conference in 1967.

Amdahl's law is often used in parallel computing to predict the theoretical speedup when using multiple processors. For example, if a program needs 20 hours to complete using a single thread, and a one-hour portion of the program cannot be parallelized, then only the remaining 19 hours of execution time (p = 0.95) can be parallelized. Therefore, regardless of how many threads are devoted to a parallelized execution of this program, the minimum execution time is always more than 1 hour. Hence, the theoretical speedup is less than 20 times the single-thread performance, $\left(\frac{1}{1-p} = 20\right)$.
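The arithmetic in this example can be checked with a short sketch. It assumes the usual statement of the law for n threads, speedup = 1 / ((1 − p) + p / n), where p is the parallelizable fraction of the run time. The function names `amdahl_speedup` and `amdahl_limit` are illustrative and do not come from any library.

```python
def amdahl_speedup(p: float, n: float) -> float:
    """Theoretical speedup when a fraction p of the run time is spread over n threads."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must lie in [0, 1]")
    if n < 1:
        raise ValueError("n must be at least 1")
    # The serial part (1 - p) is unchanged; the parallel part shrinks by a factor of n.
    return 1.0 / ((1.0 - p) + p / n)


def amdahl_limit(p: float) -> float:
    """Upper bound on the speedup as the number of threads grows without limit."""
    return float("inf") if p == 1.0 else 1.0 / (1.0 - p)


if __name__ == "__main__":
    total_hours = 20.0
    p = 19.0 / 20.0  # 19 of the 20 hours can be parallelized
    for n in (1, 2, 8, 64, 1024, 65536):
        s = amdahl_speedup(p, n)
        print(f"{n:>6} threads: speedup {s:6.2f}, run time {total_hours / s:5.2f} h")
    print(f"limit as n grows: {amdahl_limit(p):.2f}")
```

Under these assumptions the printed run time approaches, but never reaches, the one-hour serial portion, and the speedup stays below the bound of 20.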

The Universal Scalability Law (USL), developed by Neil J. Gunther, extends Amdahl's law and accounts for the additional overhead due to interprocess communication. USL quantifies scalability based on parameters such as contention and coherency.[3]
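As a rough illustration of how the two parameters interact, the sketch below uses the commonly quoted form of the USL, C(N) = N / (1 + α(N − 1) + βN(N − 1)). The symbols α (contention) and β (coherency), and the function names `usl_capacity` and `usl_peak`, are assumptions made for this example and are not taken from the text above. With β = 0 the expression reduces to Amdahl's law with serial fraction α = 1 − p.

```python
import math


def usl_capacity(n: float, alpha: float, beta: float) -> float:
    """Relative capacity at n processors under the assumed USL form.

    alpha models contention (serialized work); beta models coherency
    (pairwise communication) overhead. Both names are illustrative.
    """
    if n < 1:
        raise ValueError("n must be at least 1")
    return n / (1.0 + alpha * (n - 1.0) + beta * n * (n - 1.0))


def usl_peak(alpha: float, beta: float) -> float:
    """Processor count at which the assumed USL curve peaks (requires beta > 0)."""
    if beta <= 0.0:
        raise ValueError("the curve has no finite peak when beta is zero")
    return math.sqrt((1.0 - alpha) / beta)


if __name__ == "__main__":
    alpha, beta = 0.05, 0.001  # hypothetical parameter values
    for n in (1, 8, 16, 32, 64, 128):
        print(f"{n:>4} processors: relative capacity {usl_capacity(n, alpha, beta):5.2f}")
    print(f"capacity peaks near N = {usl_peak(alpha, beta):.1f}")
```

Unlike the Amdahl curve, which levels off at a ceiling, this curve falls once N passes the peak, because the coherency term grows quadratically with N.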

1. Rodgers, David P. (June 1985). "Improvements in multiprocessor system design". ACM SIGARCH Computer Architecture News. 13 (3). New York, NY, USA: ACM: 225–231 [p. 226]. doi:10.1145/327070.327215. ISBN 0-8186-0634-7. ISSN 0163-5964. S2CID 7083878.

2. Reddy, Martin (2011). API Design for C++. Burlington, Massachusetts: Morgan Kaufmann Publishers. p. 210. doi:10.1016/C2010-0-65832-9. ISBN 978-0-12-385003-4. LCCN 2010039601. OCLC 666246330.

3. Gunther, Neil (2007). Guerrilla Capacity Planning: A Tactical Approach to Planning for Highly Scalable Applications and Services. ISBN 978-3540261384.