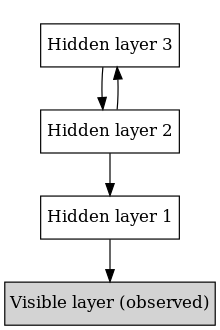

In machine learning, a deep belief network (DBN) is a generative graphical model, or alternatively a class of deep neural network, composed of multiple layers of latent variables ("hidden units"), with connections between the layers but not between units within each layer.[1]

When trained on a set of examples without supervision, a DBN can learn to probabilistically reconstruct its inputs. The layers then act as feature detectors.[1] After this learning step, a DBN can be further trained with supervision to perform classification.[2]

DBNs can be viewed as a composition of simple, unsupervised networks such as restricted Boltzmann machines (RBMs)[1] or autoencoders,[3] where each sub-network's hidden layer serves as the visible layer for the next. An RBM is an undirected, generative energy-based model with a "visible" input layer and a hidden layer, with connections between the two layers but not within either layer. This composition leads to a fast, layer-by-layer unsupervised training procedure: contrastive divergence is applied to each sub-network in turn, starting from the "lowest" pair of layers, whose visible layer receives the training data.
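
The layer-by-layer procedure can be illustrated with a minimal NumPy sketch. It is not taken from the cited sources: it assumes binary units, full-batch updates, and one-step contrastive divergence (CD-1) that uses probabilities rather than samples in the negative phase. The names `RBM`, `train_dbn`, and `reconstruct`, the toy data, and all hyperparameters are hypothetical choices made for illustration.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


class RBM:
    """Binary restricted Boltzmann machine trained with CD-1 (illustrative)."""

    def __init__(self, n_visible, n_hidden, rng):
        self.rng = rng
        self.W = 0.01 * rng.standard_normal((n_visible, n_hidden))
        self.a = np.zeros(n_visible)  # visible biases
        self.b = np.zeros(n_hidden)   # hidden biases

    def hidden_probs(self, v):
        return sigmoid(v @ self.W + self.b)

    def visible_probs(self, h):
        return sigmoid(h @ self.W.T + self.a)

    def cd1_step(self, v0, lr):
        # Positive phase: hidden units driven by the data.
        ph0 = self.hidden_probs(v0)
        h0 = (self.rng.random(ph0.shape) < ph0).astype(float)
        # Negative phase: one Gibbs step down to the visible layer and up again
        # (probabilities are used here instead of samples, an assumption).
        pv1 = self.visible_probs(h0)
        ph1 = self.hidden_probs(pv1)
        n = v0.shape[0]
        self.W += lr * (v0.T @ ph0 - pv1.T @ ph1) / n
        self.a += lr * (v0 - pv1).mean(axis=0)
        self.b += lr * (ph0 - ph1).mean(axis=0)


def train_dbn(data, layer_sizes, epochs=200, lr=0.1, seed=0):
    """Greedily train a stack of RBMs, lowest pair of layers first."""
    rng = np.random.default_rng(seed)
    rbms, layer_input = [], data
    for n_hidden in layer_sizes:
        rbm = RBM(layer_input.shape[1], n_hidden, rng)
        for _ in range(epochs):
            rbm.cd1_step(layer_input, lr)
        rbms.append(rbm)
        # The trained hidden layer serves as the visible layer of the next RBM.
        layer_input = rbm.hidden_probs(layer_input)
    return rbms


def reconstruct(rbms, v):
    """Propagate up through every layer, then back down to the input space."""
    h = v
    for rbm in rbms:
        h = rbm.hidden_probs(h)
    for rbm in reversed(rbms):
        h = rbm.visible_probs(h)
    return h


# Toy training set: two repeated 6-bit patterns (hypothetical data).
data = np.array([[1, 1, 1, 0, 0, 0], [0, 0, 0, 1, 1, 1]] * 10, dtype=float)
rbms = train_dbn(data, layer_sizes=[4, 2])
recon = reconstruct(rbms, data)  # per-unit activation probabilities
```

Each RBM is trained only on the output of the layer below it, so no gradient passes through the whole stack during this unsupervised stage; any supervised fine-tuning would be a separate step.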

The observation[2] that DBNs can be trained greedily, one layer at a time, led to one of the first effective deep learning algorithms.[4]: 6 DBNs have since been applied in real-world settings such as electroencephalography[5] and drug discovery.[6][7][8]

- Hinton G (2009). "Deep belief networks". Scholarpedia. 4 (5): 5947. Bibcode:2009SchpJ...4.5947H. doi:10.4249/scholarpedia.5947.

- Hinton GE, Osindero S, Teh YW (July 2006). "A fast learning algorithm for deep belief nets" (PDF). Neural Computation. 18 (7): 1527–54. CiteSeerX 10.1.1.76.1541. doi:10.1162/neco.2006.18.7.1527. PMID 16764513. S2CID 2309950.

- Bengio Y, Lamblin P, Popovici D, Larochelle H (2007). "Greedy Layer-Wise Training of Deep Networks" (PDF). NIPS.

- Bengio Y (2009). "Learning Deep Architectures for AI" (PDF). Foundations and Trends in Machine Learning. 2: 1–127. CiteSeerX 10.1.1.701.9550. doi:10.1561/2200000006.

- Movahedi F, Coyle JL, Sejdic E (May 2018). "Deep Belief Networks for Electroencephalography: A Review of Recent Contributions and Future Outlooks". IEEE Journal of Biomedical and Health Informatics. 22 (3): 642–652. doi:10.1109/jbhi.2017.2727218. PMC 5967386. PMID 28715343.

- Ghasemi, Pérez-Sánchez; Mehri, Pérez-Garrido (2018). "Neural network and deep-learning algorithms used in QSAR studies: merits and drawbacks". Drug Discovery Today. 23 (10): 1784–1790. doi:10.1016/j.drudis.2018.06.016. PMID 29936244. S2CID 49418479.

- Ghasemi, Pérez-Sánchez; Mehri, Fassihi (2016). "The Role of Different Sampling Methods in Improving Biological Activity Prediction Using Deep Belief Network". Journal of Computational Chemistry. 38 (10): 1–8. doi:10.1002/jcc.24671. PMID 27862046. S2CID 12077015.

- Gawehn E, Hiss JA, Schneider G (January 2016). "Deep Learning in Drug Discovery". Molecular Informatics. 35 (1): 3–14. doi:10.1002/minf.201501008. PMID 27491648. S2CID 10574953.